Docker

Introduction

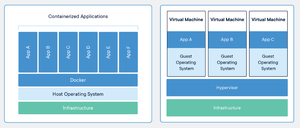

Docker is a set of platform as a service products that use OS-level virtualization to deliver software in packages called containers. Containers are isolated from one another and bundle their own software, libraries and configuration files but they can communicate with each other through well-defined channels.

https://www.docker.com - Official Web Site.

https://hub.docker.com - Official Repository of Container Images.

It was originally developed for programmers to test their software but has now become a fully fledged answer to running servers in mission critical situations.

Each container has a mini operating system plus the software needed to run the program you want, and no more.

All of the 'hard work' for a piece of software has been 'done for you' and the end result is starting a program with one command line.

For example, the WordPress image contains the LAP part of LAMP (Linux + Apache + PHP) all configured and running.

Images

Useful Wiki

Installation

WINDOWS

- Make sure your computer supports Hardware Virtualisation by checking in the BIOS.

- Install Docker Desktop.

- Install the Windows Subsystem for Linux Kernel Update.

- Reboot.

LINUX

New All In One Official Method

sudo -i
curl -fsSL https://get.docker.com | sh

Engine

This will remove the old version of 'Docker' and install the new version 'Docker CE'...

sudo apt-get remove docker docker-engine docker.io containerd runc
sudo apt-get update
sudo apt-get -y install apt-transport-https ca-certificates curl gnupg software-properties-common
sudo mkdir -p /etc/apt/keyrings
curl -fsSL https://download.docker.com/linux/ubuntu/gpg | sudo gpg --dearmor -o /etc/apt/keyrings/docker.gpg
echo "deb [arch=$(dpkg --print-architecture) signed-by=/etc/apt/keyrings/docker.gpg] https://download.docker.com/linux/ubuntu $(lsb_release -cs) stable" | sudo tee /etc/apt/sources.list.d/docker.list > /dev/null
sudo apt-get update
sudo apt-get -y install docker-ce docker-ce-cli containerd.io docker-compose-plugin
sudo service docker start
sudo docker run hello-world

https://docs.docker.com/install/linux/docker-ce/ubuntu/#install-docker-ce-1

Compose From Command Line

http://composerize.com - Turns docker run commands into docker-compose files!

Install composerize and convert your own commands locally.

sudo apt install npm
sudo npm install composerize -g
composerize docker run -p 80:80 -v /var/run/docker.sock:/tmp/docker.sock:ro --restart always --log-opt max-size=1g nginx

https://github.com/magicmark/composerize

Using Ansible

docker-install.yml

- hosts: all
  become: yes
  tasks:

    # Install Docker
    # --
    #
    - name: install prerequisites
      apt:
        name:
          - apt-transport-https
          - ca-certificates
          - curl
          - gnupg-agent
          - software-properties-common
        update_cache: yes

    - name: add apt-key
      apt_key:
        url: https://download.docker.com/linux/ubuntu/gpg

    - name: add docker repo
      apt_repository:
        repo: deb https://download.docker.com/linux/ubuntu focal stable

    - name: install docker
      apt:
        name:
          - docker-ce
          - docker-ce-cli
          - containerd.io
        update_cache: yes

    - name: add user permissions
      shell: "usermod -aG docker ubuntu"

    # Installs Docker SDK
    # --
    #
    - name: install python package manager
      apt:
        name: python3-pip

    - name: install python sdk
      become_user: ubuntu
      pip:
        name:
          - docker
          - docker-compose

Then run Ansible using your playbook on your server host ...

ansible-playbook docker-install.yml -l 'myserver'

You can then deploy a container using Ansible as well. This will deploy Portainer ...

docker_deploy-portainer.yml

- hosts: all
  become: yes
  become_user: ubuntu
  tasks:

    # Create Portainer Volume
    # --
    #
    - name: Create new Volume
      community.docker.docker_volume:
        name: portainer-data

    # Deploy Portainer
    # --
    #
    - name: Deploy Portainer
      community.docker.docker_container:
        name: portainer
        image: "docker.io/portainer/portainer-ce"
        ports:
          - "9000:9000"
          - "9443:9443"
        volumes:
          - /var/run/docker.sock:/var/run/docker.sock
          - portainer-data:/data
        restart_policy: always

... with this command ...

ansible-playbook docker_deploy-portainer.yml -l 'myserver'

Moving the Docker Volume Storage Location

Why?

Because you only have a limited space on your root volume and you want to use the extra 100 GB volume you mounted on your Oracle VPS :)

How?

Shut down any containers and the docker services.

systemctl stop docker.socket docker.service containerd.service

Create a directory on your big disk

sudo mkdir /data1/docker

Rsync the current docker directory with the big disk directory

sudo rsync -av /var/lib/docker/ /data1/docker/

Rename the existing docker library directory

sudo mv /var/lib/docker /var/lib/docker.orig

Create a symlink for the 'docker directory' to your big disk directory

sudo ln -s /data1/docker /var/lib/docker

Reboot the server

sudo reboot

Check to make sure all is well after

docker info
docker ps
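Before starting the containers again, it can help to confirm that the old path really resolves to the big disk. The sketch below is only an illustration: the function name check_docker_root is made up for this example, and the paths in the sample call are the ones used in the steps above.

```shell
# Hypothetical helper: succeeds only if LINK is a symlink that points at TARGET.
check_docker_root() {
    link="$1"
    target="$2"
    if [ ! -L "$link" ]; then
        echo "$link is not a symlink" >&2
        return 1
    fi
    actual="$(readlink "$link")"
    if [ "$actual" != "$target" ]; then
        echo "$link points to $actual, expected $target" >&2
        return 1
    fi
    echo "$link -> $target"
}

# Example call with the paths from the steps above:
# check_docker_root /var/lib/docker /data1/docker
```

If the check fails, the original data is still in /var/lib/docker.orig, so nothing is lost.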

Now, go create your BTCPay Server container ;-)

Create a symlink to your nice big disk

Usage

Statistics

docker stats
docker stats --no-stream

System information

docker system info

Run container

docker run hello-world

List containers

docker container ls
docker container ls -a

List container processes

docker ps

docker ps --format "table {{.ID}}\t{{.Names}}\t{{.Image}}\t{{.Ports}}\t{{.Status}}"

List container names

docker ps --format '{{.Names}}'

docker ps -a | awk '{print $NF}'
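One thing to watch with the awk version is that it also prints the header word NAMES, because the header row is just another line of input. A minimal sketch that skips the first row, assuming the name is always the last column (names_from_ps is a made-up helper name):

```shell
# Reads `docker ps -a` style output on stdin and prints the last column
# (the container name), skipping the header row.
names_from_ps() {
    awk 'NR > 1 { print $NF }'
}

# Typical use (requires a running Docker daemon):
# docker ps -a | names_from_ps
```

The --format '{{.Names}}' form above avoids the problem altogether, so this is mainly useful when the table output has already been saved to a file.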

List volumes

docker volume ls
docker volume ls -f dangling=true

List networks

docker network ls

Information about container

docker container inspect container_name_or_id

Stop container

docker stop container_name

Delete container

docker rm container_name

Delete volumes

docker volume rm volume_name

Delete all unused volumes

docker volume prune

Delete all unused networks

docker network prune

Prune everything unused

docker system prune

Upgrade a stack

docker-compose pull
docker-compose up -d

BASH Aliases for use with Docker commands

alias dcd='docker-compose down'
alias dcr='docker-compose restart'
alias dcu='docker-compose up -d'
alias dps='docker ps'

Run Command In Docker Container

e.g.

List the mail queue for a running email server ...

docker exec -it mail.domain.co.uk-mailserver mailq

Setting Memory And CPU Limits In Docker

service:
  image: nginx
  mem_limit: 256m
  mem_reservation: 128M
  cpus: 0.5
  ports:
    - "80:80"

https://www.baeldung.com/ops/docker-memory-limit
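For a container started without Compose, the same limits can be passed to docker run with the --memory, --memory-reservation and --cpus options. The sketch below only prints the command so it can be reviewed first; limited_run_cmd is a made-up helper name and the values are the ones from the Compose fragment above.

```shell
# Hypothetical helper: prints the `docker run` command that mirrors the
# Compose limits above (mem_limit, mem_reservation, cpus).
limited_run_cmd() {
    image="$1"
    mem="$2"
    reservation="$3"
    cpus="$4"
    printf 'docker run -d --memory %s --memory-reservation %s --cpus %s -p 80:80 %s\n' \
        "$mem" "$reservation" "$cpus" "$image"
}

limited_run_cmd nginx 256m 128m 0.5
```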

Volumes

Multiple Containers

Use volumes which are bind mounted from the host filesystem between multiple containers.

First, create the volume bind mounted to the folder...

docker volume create --driver local --opt type=none --opt device=/path/to/folder --opt o=bind volume_name

Then, use it in your docker compose file...

services:
  ftp.domain.uk-nginx:
    image: nginx
    container_name: ftp.domain.uk-nginx
    expose:
      - "80"
    volumes:
      - ./data/etc/nginx:/etc/nginx
      - ftp.domain.uk:/usr/share/nginx:ro
    environment:
      - VIRTUAL_HOST=ftp.domain.uk

networks:
  default:
    external:
      name: nginx-proxy-manager

volumes:
  ftp.domain.uk:
    external: true

Using volumes in Docker Compose

Networks

Basic Usage

Create your network...

docker network create networkname

Use it in your docker-compose.yml file...

services:
  service_name:
    image: image_name:latest
    restart: always
    networks:
      - networkname

networks:
  networkname:
    external: true

https://poopcode.com/join-to-an-existing-network-from-a-docker-container-in-docker-compose/

Advanced Usage

Static IP Address

networks:
  traefik:
    ipv4_address: 172.19.0.9
  backend: null

Force Docker Containers to use a VPN for their Network

Block IP Address Using IPTables

The key here is to make sure you use the -I or INSERT command for the rule so that it is FIRST in the chain.

Block IP addresses from LITHUANIA

iptables -I DOCKER-USER -i eth0 -s 141.98.10.0/24 -j DROP
iptables -I DOCKER-USER -i eth0 -s 141.98.11.0/24 -j DROP
iptables -L DOCKER-USER --line-numbers

Chain DOCKER-USER (1 references)
num  target  prot  opt  source            destination
1    DROP    all   --   141.98.11.0/24    anywhere
2    DROP    all   --   141.98.10.0/24    anywhere
3    RETURN  all   --   anywhere          anywhere
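When there are more than a couple of ranges to block, the rules can be generated from a list. This is a rough sketch rather than a finished tool: block_rules is a made-up name, and it only prints the commands so they can be checked before anything is applied.

```shell
# Hypothetical helper: prints one insert rule per CIDR read from stdin.
# Nothing is applied; review the output before running it as root.
block_rules() {
    iface="$1"
    while read -r cidr; do
        [ -n "$cidr" ] || continue
        printf 'iptables -I DOCKER-USER -i %s -s %s -j DROP\n' "$iface" "$cidr"
    done
}

printf '141.98.10.0/24\n141.98.11.0/24\n' | block_rules eth0
```

Because each rule is inserted at the top of the chain, the last range in the list ends up as rule 1, which matches the listing above.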

Display IP Addresses In A Docker Network Sorted Numerically

docker network inspect traefik | jq -r 'map(.Containers[].IPv4Address) | .[]' | sort -t . -k 3,3n -k 4,4n
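The numeric sort keys matter because a plain text sort would put .10 before .2. The sketch below shows the same idea on sample data, stripping the /prefix and sorting on all four octets; sort_ips is a made-up name and the addresses are examples only.

```shell
# Strips the /prefix from each address and sorts on all four octets numerically.
sort_ips() {
    sed 's|/.*||' | sort -t . -k 1,1n -k 2,2n -k 3,3n -k 4,4n
}

printf '172.19.0.10/16\n172.19.0.9/16\n172.19.0.2/16\n' | sort_ips
```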

Docker Compose

Restart Policy

The "no" option has quotes around it...

restart: "no"
restart: always
restart: on-failure
restart: unless-stopped

Management

Cleaning Pruning

sudo docker system df
sudo docker system prune

cd /etc/cron.daily
sudo nano docker-prune

#!/bin/bash
docker system prune -f

sudo chmod +x /etc/cron.daily/docker-prune
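The cron script can also be written without opening an editor. The sketch below is one way to do it, with install_prune_job as a made-up helper name; it uses -f (force) so that the prune does not wait for a confirmation prompt when run unattended.

```shell
# Hypothetical helper: writes the prune script into DIR and makes it executable.
# DIR would be /etc/cron.daily on the server; a scratch directory works for a trial.
install_prune_job() {
    dir="$1"
    cat > "$dir/docker-prune" <<'EOF'
#!/bin/bash
docker system prune -f
EOF
    chmod +x "$dir/docker-prune"
}

# Example call (needs root to write to /etc/cron.daily):
# install_prune_job /etc/cron.daily
```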

https://alexgallacher.com/prune-unused-docker-images-automatically/

Delete All Stopped Docker Containers

docker rm $(docker ps --filter "status=exited" -q)

Updating with Docker Compose

for d in ./*/ ; do (cd "$d" && sudo docker-compose pull && sudo docker-compose --compatibility up -d); done
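The loop above assumes every sub-directory is a stack. A slightly more cautious sketch is shown below: it skips directories without a docker-compose.yml and has a dry-run mode. The function name update_stacks and the DRY_RUN variable are made up for this example.

```shell
# Hypothetical helper: lists (or updates) every stack directory under ROOT
# that contains a docker-compose.yml. With DRY_RUN=1 it only prints the plan.
update_stacks() {
    root="$1"
    for dir in "$root"/*/; do
        [ -f "${dir}docker-compose.yml" ] || continue
        if [ "${DRY_RUN:-0}" = "1" ]; then
            echo "would update ${dir}"
        else
            (cd "$dir" && docker-compose pull && docker-compose up -d)
        fi
    done
}

# Example calls:
# DRY_RUN=1 update_stacks ~/docker/stacks
# update_stacks ~/docker/stacks
```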

Logs Logging

If you want to watch the logs in real time, then add the -f or --follow option to your command ...

docker logs nginx --follow

or

docker logs nginx -f --tail 20

After executing docker-compose up, list your running containers:

docker ps

Copy the NAME of the given container and read its logs:

docker logs NAME_OF_THE_CONTAINER -f

To only read the error logs:

docker logs NAME_OF_THE_CONTAINER -f 1>/dev/null

To only read the access logs:

docker logs NAME_OF_THE_CONTAINER -f 2>/dev/null
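These redirections work because docker logs passes the container's stdout and stderr through as two separate streams, and images such as nginx are usually set up to send access lines to stdout and error lines to stderr. The sketch below imitates that with a stand-in function (fake_logs is made up and no container is involved):

```shell
# Stand-in for a container that logs to both streams:
# access lines on stdout, error lines on stderr.
fake_logs() {
    echo "GET /index.html 200"
    echo "upstream timed out" >&2
}

fake_logs 2>/dev/null      # discards stderr, keeps the access line
fake_logs 1>/dev/null      # discards stdout, keeps the error line
```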

https://linuxhandbook.com/docker-logging/

To search or grep the logs:

docker logs watchtower 2>&1 | grep 'msg="Found new'

Cleaning Space

Over the last month, a whopping 14 GB of space had been used up by /var/lib/docker/overlay2/, and I needed a way to safely remove the unused data.

Check your space usage...

du -mcsh /var/lib/docker/overlay2
14G /var/lib/docker/overlay2

Check what Docker thinks is being used...

docker system df

TYPE            TOTAL   ACTIVE   SIZE      RECLAIMABLE
Images          36      15       8.368GB   4.491GB (53%)
Containers      17      15       70.74MB   286B (0%)
Local Volumes   4       2        0B        0B
Build Cache     0       0        0B        0B

Clean...

docker system prune
docker image prune --all

Check again...

du -mcsh /var/lib/docker/overlay2
9.4G /var/lib/docker/overlay2

docker system df

TYPE            TOTAL   ACTIVE   SIZE      RECLAIMABLE
Images          13      13       4.144GB   144MB (3%)
Containers      15      15       70.74MB   0B (0%)
Local Volumes   4       2        0B        0B
Build Cache     0       0        0B        0B

...job done.

Dockge

Better than Portainer.

A fancy, easy-to-use and reactive docker 'compose.yaml' stack-oriented manager

https://github.com/louislam/dockge

Portainer

https://github.com/portainer/portainer

Server

https://hub.docker.com/r/portainer/portainer-ce

Agent

Portainer uses the Portainer Agent container to communicate with the Portainer Server instance and provide access to the node's resources. So, this is great for a small server (like a Raspberry Pi) where you don't need the full Portainer Server install.

Deployment

Run the following command to deploy the Portainer Agent:

docker run -d -p 9001:9001 --name portainer_agent --restart=always -v /var/run/docker.sock:/var/run/docker.sock -v /var/lib/docker/volumes:/var/lib/docker/volumes portainer/agent:2.11.0

Adding your new environment

Once the agent has been installed you are ready to add the environment to your Portainer Server installation.

From the menu select Environments then click Add environment.

From the Environment type section, select Agent. Since we have already installed the agent you can ignore the sample commands in the Information section.

Name: my-raspberry-pi
Environment URL: 192.168.0.106:9001

When you're ready, click Add environment.

Then, on the Portainer Home screen you select your new environment, or server running the Agent, and away you go!

Updating

Portainer

Containers > Select > Stop > Recreate > Pull Latest Image > Start

Watchtower

List updates ...

docker run --rm -v /var/run/docker.sock:/var/run/docker.sock containrrr/watchtower --run-once --log-format pretty --monitor-only

Perform updates ...

docker run --rm -v /var/run/docker.sock:/var/run/docker.sock containrrr/watchtower --run-once --log-format pretty

Backups

https://github.com/SavageSoftware/portainer-backup

Monitoring

CTop

Press the Q key to stop it...

docker run -ti --name ctop --rm -v /var/run/docker.sock:/var/run/docker.sock wrfly/ctop:latest

Docker Stats

docker stats

DeUnhealth

Restart your unhealthy containers safely.

https://github.com/qdm12/deunhealth

https://www.youtube.com/watch?v=Oeo-mrtwRgE

Dozzle

Dozzle is a small lightweight application with a web based interface to monitor Docker logs. It doesn’t store any log files. It is for live monitoring of your container logs only.

https://github.com/amir20/dozzle

Gotchas

https://sosedoff.com/2016/10/05/docker-gotchas.html

Applications

I have set up my docker containers in a master docker directory with sub-directories for each stack.

docker
|-- backups
`-- stacks
    |-- bitwarden
    |   `-- bwdata
    |-- grafana
    |   `-- data
    |-- mailserver
    |   `-- data
    |-- nginx-proxy-manager
    |   `-- data
    `-- portainer
        `-- data

Backups

https://github.com/alaub81/backup_docker_scripts

Updates

Tracking

Watchtower

A process for automating Docker container base image updates.

With watchtower you can update the running version of your containerized app simply by pushing a new image to the Docker Hub or your own image registry. Watchtower will pull down your new image, gracefully shut down your existing container and restart it with the same options that were used when it was deployed initially.

First Time Run Once Check Only

This will run, report whether there are any updates, then stop and remove itself...

docker run --rm --name watchtower -v /var/run/docker.sock:/var/run/docker.sock containrrr/watchtower --run-once --debug --monitor-only

Automated Scheduled Run Daily

This will start the container and schedule a check at 4am every day...

~/watchtower/docker-compose.yml

version: "3"
services:
  watchtower:
    image: containrrr/watchtower
    container_name: watchtower
    restart: always
    volumes:
      - /var/run/docker.sock:/var/run/docker.sock
    environment:
      - TZ=${TZ}
      - WATCHTOWER_DEBUG=true
      - WATCHTOWER_MONITOR_ONLY=false
      - WATCHTOWER_CLEANUP=true
      - WATCHTOWER_LABEL_ENABLE=false
      - WATCHTOWER_NOTIFICATIONS=email
      - WATCHTOWER_NOTIFICATION_EMAIL_FROM=${EMAIL_FROM}
      - WATCHTOWER_NOTIFICATION_EMAIL_TO=${WATCHTOWER_EMAIL_TO}
      - WATCHTOWER_NOTIFICATION_EMAIL_SERVER=${SMTP_SERVER}
      - WATCHTOWER_NOTIFICATION_EMAIL_SERVER_PORT=${SMTP_PORT}
      - WATCHTOWER_NOTIFICATION_EMAIL_SERVER_USER=${SMTP_USER}
      - WATCHTOWER_NOTIFICATION_EMAIL_SERVER_PASSWORD=${SMTP_PASSWORD}
      - WATCHTOWER_SCHEDULE=0 0 4 * * *
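The schedule has six fields rather than the five used by a normal crontab, because the first field is seconds. The sketch below simply labels each field so the 4am setting is easier to read; describe_schedule is a made-up helper and is not part of Watchtower.

```shell
# Hypothetical helper: labels the six fields of a Watchtower-style schedule.
describe_schedule() {
    set -f                      # stop the shell expanding the * fields
    set -- $1                   # split the expression on whitespace
    set +f
    if [ "$#" -ne 6 ]; then
        echo "expected 6 fields, got $#" >&2
        return 1
    fi
    echo "second=$1 minute=$2 hour=$3 day-of-month=$4 month=$5 day-of-week=$6"
}

describe_schedule "0 0 4 * * *"
```

A five-field crontab line pasted in by mistake is rejected by the field count check.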

https://containrrr.dev/watchtower/

https://containrrr.dev/watchtower/arguments/#without_updating_containers

https://github.com/containrrr/watchtower

https://www.the-digital-life.com/watchtower/

https://www.youtube.com/watch?v=5lP_pdjcVMo

Updating

You can either ask Watchtower to update the containers automatically for you, or do it manually.

Manually updating when using docker-compose...

cd /path/to/docker/stack/
docker-compose down
docker-compose pull
docker-compose up -d

Bitwarden

~/vaultwarden/docker-compose.yml

services:
  vaultwarden:
    image: "vaultwarden/server:latest"
    container_name: "vaultwarden"
    restart: "always"
    networks:
      traefik:
        ipv4_address: 172.19.0.4
    ports:
      - "8100:80"
    volumes:
      - "./data:/data/"
    environment:
      - "TZ=Europe/London"
      - "SIGNUPS_ALLOWED=true"
      - "INVITATIONS_ALLOWED=true"
      - "WEB_VAULT_ENABLED=true"
    labels:
      - "traefik.enable=true"
      - "traefik.docker.network=traefik"
      - "traefik.http.routers.vaultmydomaincom.rule=Host(`vault.mydomain.com`)"
      - "traefik.http.routers.vaultmydomaincom.entrypoints=websecure"
      - "traefik.http.routers.vaultmydomaincom.tls.certresolver=letsencrypt-gandi"
      - "com.centurylinklabs.watchtower.enable=true"

networks:
  traefik:
    external: true

After you have created an account, signed in and imported your passwords, please change the SIGNUPS_ALLOWED, INVITATIONS_ALLOWED and WEB_VAULT_ENABLED to false.

docker-compose down
docker-compose up -d

Check that the Bitwarden container environment has all the variables...

docker exec -it vaultwarden env | sort

HOME=/root
HOSTNAME=e5f327deb4dd
INVITATIONS_ALLOWED=false
PATH=/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin
ROCKET_ENV=staging
ROCKET_PORT=80
ROCKET_WORKERS=10
SIGNUPS_ALLOWED=false
TERM=xterm
TZ=Europe/London
WEB_VAULT_ENABLED=false
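The same check can be scripted so that a forgotten setting stands out. This is a minimal sketch, assuming the three variable names used in the stack above; check_locked_down is a made-up helper that reads the env output on stdin.

```shell
# Hypothetical helper: reads `env` output on stdin and reports any of the
# three settings that is still missing or not set to false.
check_locked_down() {
    input="$(cat)"
    status=0
    for name in SIGNUPS_ALLOWED INVITATIONS_ALLOWED WEB_VAULT_ENABLED; do
        if ! printf '%s\n' "$input" | grep -qx "${name}=false"; then
            echo "$name is not false"
            status=1
        fi
    done
    return "$status"
}

# Typical use (requires the running container; no -it, so lines stay clean):
# docker exec vaultwarden env | check_locked_down
```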

... and then refresh your web vault page to see "404: Not Found" :-)

InfluxDB

You can have InfluxDB on its own but there is little point without something to view the stats so you might as well include InfluxDB in the Grafana stack and start both at the same time... see below :-)

Grafana

Here is a stack in docker-compose which starts both containers in their own network so they can talk to one another. I have exposed ports for InfluxDB and Grafana to the host so I can use them from the internet.

Obviously, put your firewall in place and change the passwords below!

~/grafana/docker-compose.yml

version: "3"
services:
  grafana:
    image: grafana/grafana
    container_name: grafana
    restart: always
    networks:
      - grafana-influxdb-network
    ports:
      - 3000:3000
    volumes:
      - ./data/grafana:/var/lib/grafana
    environment:
      - INFLUXDB_URL=http://influxdb:8086
    depends_on:
      - influxdb

  influxdb:
    image: influxdb:1.8.4
    container_name: influxdb
    restart: always
    networks:
      - grafana-influxdb-network
    ports:
      - 8086:8086
    volumes:
      - ./data/influxdb:/var/lib/influxdb
    environment:
      - INFLUXDB_DB=grafana
      - INFLUXDB_USER=grafana
      - INFLUXDB_USER_PASSWORD=password
      - INFLUXDB_ADMIN_ENABLED=true
      - INFLUXDB_ADMIN_USER=admin
      - INFLUXDB_ADMIN_PASSWORD=password
      - INFLUXDB_URL=http://influxdb:8086

networks:
  grafana-influxdb-network:
    external: true

After this, change your Telegraf configuration to point to the new host and change the database it uses to 'grafana'.

Traefik

/root/docker/traefik/docker-compose.yml

services:
  traefik:
    image: "traefik:latest"
    container_name: "traefik"
    networks:
      traefik:
        ipv4_address: 172.19.0.2
    ports:
      - "80:80"
      - "443:443"
    volumes:
      - "/etc/timezone:/etc/timezone:ro"
      - "/etc/localtime:/etc/localtime:ro"
      - "./config/traefik/config.yml:/etc/traefik/config.yml:ro"
      - "./config/traefik/traefik.yml:/etc/traefik/traefik.yml:ro"
      - "./acme/:/acme/"
      - "/var/run/docker.sock:/var/run/docker.sock:ro"
      - "./logs/:/var/log/"
    environment:
      - "TZ=Europe/London"
      - "AWS_ACCESS_KEY_ID=xxxxxxxxxxxxxxxxxxxxxx"
      - "AWS_SECRET_ACCESS_KEY=xxxxxxxxxxxxxxxxxxxxxxxxxxxx"
      - "AWS_REGION=eu-west-2"
      - "GANDIV5_API_KEY=xxxxxxxxxxxxxxxxx"
    labels:
      - "traefik.enable=true"
      - "traefik.http.routers.traefik-dashboard.rule=Host(`traefik-dashboard.mydomain.com`) && (PathPrefix(`/api`) || PathPrefix(`/dashboard`))"
      - "traefik.http.routers.traefik-dashboard.service=api@internal"
      - "traefik.http.routers.traefik-dashboard.middlewares=secured"
      - "traefik.http.middlewares.secured.chain.middlewares=auth,rate"
      - "traefik.http.middlewares.auth.basicauth.users=user-name:$xxxxxxxxxxxxxxxxUlFVbwar4jlRBO1a8K"
      - "traefik.http.middlewares.rate.ratelimit.average=100"
      - "traefik.http.middlewares.rate.ratelimit.burst=50"
      - "com.centurylinklabs.watchtower.enable=true"
    restart: "always"

networks:
  traefik:
    external: true

config/traefik/traefik.yml

accessLog: {}
accesslog:
  filePath: "/var/log/access.log"
  fields:
    names:
      StartUTC: drop

log:
  filePath: "/var/log/traefik.log"
  level: INFO
  maxSize: 10
  maxBackups: 3
  maxAge: 3
  compress: true

api:
  dashboard: true

providers:
  docker:
    endpoint: "unix:///var/run/docker.sock"
    exposedByDefault: false
    network: traefik
  file:
    filename: /etc/traefik/config.yml
    watch: true

entryPoints:
  web:
    address: ":80"
    http:
      middlewares:
        - "crowdsec-bouncer@file"
      redirections:
        entryPoint:
          to: websecure
          scheme: https
  websecure:
    address: ":443"
    http:
      middlewares:
        - "crowdsec-bouncer@file"
      tls:
        certResolver: letsencrypt-aws
        domains:
          - main: "mydomain.co.uk"
            sans:
              - "*.mydomain.co.uk"
          - main: "mydomain.uk"
            sans:
              - "*.mydomain.uk"

certificatesResolvers:
  letsencrypt-http:
    acme:
      httpChallenge:
        entrypoint: web
      email: "myname@mydomain.co.uk"
      storage: "/acme/letsencrypt-http.json"
  letsencrypt-aws:
    acme:
      dnsChallenge:
        provider: route53
      email: "myname@mydomain.co.uk"
      storage: "/acme/letsencrypt-aws.json"
  letsencrypt-gandi:
    acme:
      dnsChallenge:
        provider: gandiv5
      email: "myname@mydomain.co.uk"
      storage: "/acme/letsencrypt-gandi.json"

config/traefik/config.yml

http:
  middlewares:
    crowdsec-bouncer:
      forwardauth:
        address: http://crowdsec-bouncer-traefik:8080/api/v1/forwardAuth
        trustForwardHeader: true

Caddy

Caddy is a lightweight, high performance web server and proxy server. It is much easier to configure than NginX or Traefik.

Here are a few examples in the Caddyfile ...

# proxy to a btcpay server running in a different docker network and static ssl certificate files
btcpay.mydomain.co.uk {
    tls /ssl/certs/fullchain.pem /ssl/certs/key.pem
    reverse_proxy generated_btcpayserver_1:49392
    log {
        output stdout
    }
}

# proxy to a wordpress web site with custom ssl dns verification
oracle-1.mydomain.co.uk {
    tls me@mydomain.co.uk {
        dns route53 {
            access_key_id "AKIAJxxxxxxxxxxxxxxx"
            secret_access_key "xxxxxxxxxxxxxxxxxxxxxxxxxxxxxx"
            region "eu-west-2"
        }
    }
    reverse_proxy oracle-1.mydomain.co.uk-nginx:80
    log
}

... and this is the docker-compose.yml file for all that above. You do need to build your own docker image for caddy which includes the dns-route-53 plugin ...

services:
  caddy:
    #image: caddy:alpine
    image: paully/caddy-dns-route53:latest
    container_name: caddy
    restart: unless-stopped
    ports:
      - 80:80
      - 443:443
      - 443:443/udp
    networks:
      - caddy
      - generated_default
    volumes:
      - /var/run/docker.sock:/var/run/docker.sock
      - ./Caddyfile:/etc/caddy/Caddyfile
      - ./data:/data
      - ./config:/config
      - ./fullchain.pem:/ssl/certs/fullchain.pem:ro
      - ./key.pem:/ssl/certs/key.pem:ro
    environment:
      - "TZ=Europe/London"
      - "AWS_ACCESS_KEY_ID=xxxxxxxxxxxxxxxxxxxxx"
      - "AWS_SECRET_ACCESS_KEY=xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx"
      - "AWS_REGION=eu-west-2"

networks:
  caddy:
    external: true
  generated_default:
    external: true

Do I have to restart the container after each Caddyfile change?

Caddy does not require a full restart when configuration is changed. Caddy comes with a caddy reload command which can be used to reload its configuration with zero downtime.

When running Caddy in Docker, the recommended way to trigger a config reload is by executing the caddy reload command in the running container.

First, you'll need to determine your container ID or name. Then, pass the container ID to docker exec. The working directory is set to /etc/caddy so Caddy can find your Caddyfile without additional arguments.

caddy_container_id=$(docker ps | grep caddy | awk '{print $1;}')

docker exec -w /etc/caddy $caddy_container_id caddy reload
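A typo in the Caddyfile is easier to deal with if it is caught before the reload. The sketch below runs caddy validate first and only reloads if that succeeds. It assumes the container is named caddy, as in the compose file above, and reload_caddy is a made-up helper name.

```shell
# Hypothetical helper: validates the Caddyfile inside the container and
# reloads only if validation passes. The container name defaults to "caddy".
reload_caddy() {
    container="${1:-caddy}"
    docker exec -w /etc/caddy "$container" caddy validate --config /etc/caddy/Caddyfile \
        && docker exec -w /etc/caddy "$container" caddy reload
}

# Example call (requires the running container):
# reload_caddy
```

Using the fixed container name also avoids the grep, which could match more than one container if another name contains "caddy".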

How do I disable the Caddy admin endpoint?

Add this to the top of your Caddyfile ...

{
    admin off
}

WordPress

This uses Caddy as the proxy container in the stack with WordPress FPM and MariaDB.

So, the files are all in 1 folder and on the host as a bind volume for Caddy and WordPress. The PHP is handled by Caddy and passed to WordPress PHP-FPM on the container port of 9000, thereby removing the need for the NGINX container because Caddy is doing that part. So, it's very fast!

Caddy > PHP > WordPress

example.com {
    root * /var/www/html
    php_fastcgi wordpress:9000
    file_server
}

https://sumguy.com/wordpress-on-php-fpm-caddy-in-docker/

NGiNX Proxy Manager

Provide users with an easy way to accomplish reverse proxying hosts with SSL termination that is so easy a monkey could do it.

- Set up your host

- Add a proxy to point to the host (in Docker this will be the 'name' and the port)

- Go to http://yourhost

https://github.com/jc21/nginx-proxy-manager

Create the Docker network with a chosen subnet (used later for fixing container IP addresses)...

sudo -i
docker network create --subnet=172.20.0.0/16 nginx-proxy-manager

/root/stacks/nginx-proxy-manager/docker-compose.yml

version: '3'
services:
  db:
    image: 'jc21/mariadb-aria:latest'
    container_name: nginx-proxy-manager_db
    restart: always
    networks:
      nginx-proxy-manager:
        ipv4_address: 172.20.0.2
    environment:
      TZ: "Europe/London"
      MYSQL_ROOT_PASSWORD: 'npm'
      MYSQL_DATABASE: 'npm'
      MYSQL_USER: 'npm'
      MYSQL_PASSWORD: 'npm'
    volumes:
      - ./data/mysql:/var/lib/mysql

  app:
    image: 'jc21/nginx-proxy-manager:latest'
    container_name: nginx-proxy-manager_app
    restart: always
    networks:
      nginx-proxy-manager:
        ipv4_address: 172.20.0.3
    ports:
      - '80:80'
      - '81:81'
      - '443:443'
    environment:
      TZ: "Europe/London"
      DB_MYSQL_HOST: "db"
      DB_MYSQL_PORT: 3306
      DB_MYSQL_USER: "npm"
      DB_MYSQL_PASSWORD: "npm"
      DB_MYSQL_NAME: "npm"
    volumes:
      - ./data:/data
      - ./data/letsencrypt:/etc/letsencrypt
    depends_on:
      - db

networks:
  nginx-proxy-manager:
    external: true

Reset Password

docker exec -it nginx-proxy-manager_db sh
mysql -u root -p npm
select * from user;
delete from user where id=1;
quit;
exit

Custom SSL Certificate

You can add a custom SSL certificate to NPM by saving the 3 parts of the SSL from Let's Encrypt...

- privkey.pem

- cert.pem

- chain.pem

...and then uploading them to NPM.

Updating

docker-compose pull
docker-compose up -d

Fix Error: Bad Gateway - error create table npm.migrations Permission Denied

https://github.com/NginxProxyManager/nginx-proxy-manager/issues/1499#issuecomment-1656997077

docker exec -it nginx-proxy-manager_db sh
cd /var/lib/mysql
chown -R mysql:mysql npm
exit

NGiNX

Quick Container

Run and delete everything afterwards (press CTRL+C to stop it)...

docker run --rm --name test.domain.org-nginx -e VIRTUAL_HOST=test.domain.org -p 80:80 nginx:alpine

Run and detach and use a host folder to store the web pages and keep the container afterwards...

docker run --name test.domain.org-nginx -e VIRTUAL_HOST=test.domain.org -v /some/content:/usr/share/nginx/html:ro -d -p 80:80 nginx:alpine

Run and detach and connect to a specific network (like nginx-proxy-manager) and use a host folder to store the web pages and keep the container afterwards...

docker run --name test.domain.org-nginx --network nginx-proxy-manager -e VIRTUAL_HOST=test.domain.org -v /some/content:/usr/share/nginx/html:ro -d -p 80:80 nginx:alpine

Check the logs and always show them (like tail -f)...

docker logs test.domain.org-nginx -f

docker-compose.yml

version: "3"
services:
  nginx:
    image: nginx
    container_name: nginx
    environment:
      - TZ=Europe/London
    volumes:
      - ./data/html:/usr/share/nginx/html:ro
      - /etc/timezone:/etc/timezone:ro
    expose:
      - 80
    restart: unless-stopped

With PHP

./data/nginx/site.conf

server {
    server_name docker-demo.com;
    root /var/www/html;
    index index.php index.html index.htm;

    access_log /var/log/nginx/access.log;
    error_log /var/log/nginx/error.log;

    location / {
        try_files $uri $uri/ /index.php?$query_string;
    }

    # PHP-FPM Configuration Nginx
    location ~ \.php$ {
        try_files $uri =404;
        fastcgi_split_path_info ^(.+\.php)(/.+)$;
        fastcgi_pass php:9000;
        fastcgi_index index.php;
        include fastcgi_params;
        fastcgi_param REQUEST_URI $request_uri;
        fastcgi_param SCRIPT_FILENAME $document_root$fastcgi_script_name;
        fastcgi_param PATH_INFO $fastcgi_path_info;
    }
}

docker-compose.yml

version: "3"
services:
  nginx:
    image: nginx
    container_name: nginx
    environment:
      - TZ=Europe/London
    volumes:
      - ./data/html:/usr/share/nginx/html:ro
      - ./data/nginx:/etc/nginx/conf.d/
      - /etc/timezone:/etc/timezone:ro
    expose:
      - 80
    restart: unless-stopped

  php:
    image: php:7.2-fpm
    volumes:
      - ./data/html:/usr/share/nginx/html:ro
      - ./data/php:/usr/local/etc/php/php.ini

https://adoltech.com/blog/how-to-set-up-nginx-php-fpm-and-mysql-with-docker-compose/

With PERL

This is a way to get the IP address of the visitor (REMOTE_ADDR) displayed...

# nginx.conf; mostly copied from defaults
load_module "modules/ngx_http_perl_module.so";
user nginx;
worker_processes auto;
error_log /var/log/nginx/error.log notice;
pid /var/run/nginx.pid;

events {
    worker_connections 1024;
}

http {
    #perl_modules /; # only needed if hello.pm isn't in @INC (e.g. dir specified below)
    perl_modules /usr/lib/perl5/vendor_perl/x86_64-linux-thread-multi/;
    perl_require hello.pm;

    server {
        location / {
            perl hello::handler;
        }
    }
}

# hello.pm; put in a @INC dir
package hello;
use nginx;

sub handler {
    my $r = shift;
    $r->send_http_header("text/html");
    return OK if $r->header_only;
    $r->print($r->remote_addr);
    return OK;
}

1;

https://www.reddit.com/r/docker/comments/oabga4/run_perl_script_in_nginx_container/

Load Balancer

This is a simple example to show multiple backend servers answering web page requests.

docker-compose.yml

version: '3'
services:
  # The load balancer
  nginx:
    image: nginx:1.16.0-alpine
    volumes:
      - ./nginx.conf:/etc/nginx/nginx.conf:ro
    ports:
      - "80:80"

  # The web server1
  server1:
    image: nginx:1.16.0-alpine
    volumes:
      - ./server1.html:/usr/share/nginx/html/index.html

  # The web server2
  server2:
    image: nginx:1.16.0-alpine
    volumes:
      - ./server2.html:/usr/share/nginx/html/index.html

nginx.conf

events {
    worker_connections 1024;
}

http {
    upstream app_servers {    # Create an upstream for the web servers
        server server1:80;    # the first server
        server server2:80;    # the second server
    }

    server {
        listen 80;

        location / {
            proxy_pass http://app_servers;    # load balance the traffic
        }
    }
}

https://omarghader.github.io/docker-compose-nginx-tutorial/

Proxy

This is very cool and allows you to run multiple web sites on-the-fly.

The container connects to the system docker socket and watches for new containers using the VIRTUAL_HOST environment variable.

Start this, then add another container using the VIRTUAL_HOST variable and the proxy container will change its config file and reload nginx to serve the web site... automatically.

Incredible.

~/nginx-proxy/docker-compose.yml

version: "3"
services:
  nginx-proxy:
    image: jwilder/nginx-proxy
    container_name: nginx-proxy
    ports:
      - "80:80"
    volumes:
      - /var/run/docker.sock:/tmp/docker.sock:ro

networks:
  default:
    external:
      name: nginx-proxy

Normal

When using the nginx-proxy container above, you can just spin up a virtual web site using the standard 'nginx' docker image and link it to the 'nginx-proxy' network...

docker run -d --name nginx-website1.uk --expose 80 --net nginx-proxy -e VIRTUAL_HOST=website1.uk nginx

To use the host filesystem to store the web page files...

docker run -d --name nginx-website1.uk --expose 80 --net nginx-proxy -v /var/www/website1.uk/html:/usr/share/nginx/html:ro -e VIRTUAL_HOST=website1.uk nginx

In Docker Compose, it will look like this...

~/nginx/docker-compose.yml

version: "3"
services:
  nginx-website1.uk:
    image: nginx
    container_name: nginx-website1.uk
    expose:
      - "80"
    volumes:
      - /var/www/website1.uk/html:/usr/share/nginx/html:ro
    environment:
      - VIRTUAL_HOST=website1.uk

networks:
  default:
    external:
      name: nginx-proxy

Multiple Virtual Host Web Sites

~/nginx/docker-compose.yml

version: "3"
services:
  nginx-website1.uk:
    image: nginx
    container_name: nginx-website1.uk
    expose:
      - "80"
    volumes:
      - /var/www/website1.uk/html:/usr/share/nginx/html:ro
    environment:
      - VIRTUAL_HOST=website1.uk

  nginx-website2.uk:
    image: nginx
    container_name: nginx-website2.uk
    expose:
      - "80"
    volumes:
      - /var/www/website2.uk/html:/usr/share/nginx/html:ro
    environment:
      - VIRTUAL_HOST=website2.uk

networks:
  default:
    external:
      name: nginx-proxy

Viewing Logs

docker-compose logs nginx-website1.uk
docker-compose logs nginx-website2.uk

Proxy Manager

This is a web front end to manage 'nginx-proxy', where you can choose containers and create SSL certificates etc.

https://cyberhost.uk/npm-setup/

Various

https://hub.docker.com/_/nginx

https://blog.ssdnodes.com/blog/host-multiple-websites-docker-nginx/

https://github.com/nginx-proxy/nginx-proxy

Typical LEMP

https://adoltech.com/blog/how-to-set-up-nginx-php-fpm-and-mysql-with-docker-compose/

WordPress

https://hub.docker.com/_/wordpress/

PHP File Uploads Fix

Create a new PHP configuration file, and name it php.ini. Add the following configuration then save the changes.

; Hide PHP version
expose_php = Off
; Allow HTTP file uploads
file_uploads = On
; Maximum size of an uploaded file
upload_max_filesize = 64M
; Maximum size of form post data
post_max_size = 64M
; Maximum Input Variables
max_input_vars = 3000

Update the docker-compose.yml to bind the php.ini to the wordpress container and then restart the WordPress container.

volumes:
  - ./data/config/php.ini:/usr/local/etc/php/conf.d/php.ini
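After the restart it is worth checking that the container actually picked up the new values. A small sketch, assuming the PHP command line binary in the WordPress image reads the same conf.d folder; php_setting and the DOCKER override are made-up names for illustration...

```shell
# Print the effective value of one PHP setting inside a running container.
# DOCKER is a hypothetical override (defaults to the docker binary).
php_setting() {
    container="$1"
    key="$2"
    ${DOCKER:-docker} exec "$container" php -r "echo ini_get('$key'), PHP_EOL;"
}
```

For example, php_setting wordpress_container_name upload_max_filesize should print the value set in php.ini above.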

WordPress Clone

Create your A record in DNS using AWS Route 53 CLI...

cli53 rrcreate domain.co.uk 'staging 300 A 203.0.113.10'

Create your docker folder for the cloned staging test web site...

mkdir -p ~/docker/stacks/staging.domain.co.uk/data/{db,html}

Edit your docker compose file, with 3 containers (database, WordPress and WP-CLI), making sure you use the same network as your Nginx Proxy Manager...

~/docker/stacks/staging.domain.co.uk/docker-compose.yml

version: "3"
services:
  staging.domain.co.uk-wordpress_db:
    image: mysql:5.7
    container_name: staging.domain.co.uk-wordpress_db
    volumes:
      - ./data/db:/var/lib/mysql
    restart: always
    environment:
      - TZ=Europe/London
      - MYSQL_ROOT_PASSWORD=changeme
      - MYSQL_DATABASE=dbname
      - MYSQL_USER=dbuser
      - MYSQL_PASSWORD=changeme

  staging.domain.co.uk-wordpress:
    depends_on:
      - staging.domain.co.uk-wordpress_db
    image: wordpress:latest
    container_name: staging.domain.co.uk-wordpress
    volumes:
      - ./data/html:/var/www/html
    restart: always
    environment:
      - TZ=Europe/London
      - VIRTUAL_HOST=staging.domain.co.uk
      - WORDPRESS_DB_HOST=staging.domain.co.uk-wordpress_db:3306
      - WORDPRESS_DB_NAME=dbname
      - WORDPRESS_DB_USER=dbuser
      - WORDPRESS_DB_PASSWORD=changeme

  staging.domain.co.uk-wordpress-cli:
    image: wordpress:cli
    container_name: staging.domain.co.uk-wordpress-cli
    volumes:
      - ./data/html:/var/www/html
    environment:
      - TZ=Europe/London
      - WP_CLI_CACHE_DIR=/tmp/
      - VIRTUAL_HOST=staging.domain.co.uk
      - WORDPRESS_DB_HOST=staging.domain.co.uk-wordpress_db:3306
      - WORDPRESS_DB_NAME=dbname
      - WORDPRESS_DB_USER=dbuser
      - WORDPRESS_DB_PASSWORD=changeme
    working_dir: /var/www/html
    user: "33:33"
networks:
  default:
    external:
      name: nginx-proxy-manager

Start containers with correct settings and credentials for existing live web site (so that the docker startup script sets up the MySQL permissions)...

docker-compose up -d

Check the logs to make sure all is well...

docker logs staging.domain.co.uk-wordpress
docker logs staging.domain.co.uk-wordpress_db

Copy the WordPress files to the host folder and correct ownership...

rsync -av /path/to/backup_unzipped_wordpress/ ~/docker/stacks/staging.domain.co.uk/data/html/
chown -R www-data:www-data ~/docker/stacks/staging.domain.co.uk/data/html/

Copy the sql file in to the running mysql container...

docker cp /path/to/backup_unzipped_wordpress/db_name.sql mysql_container_name:/tmp/

Log in to the database container...

docker exec -it mysql_container_name bash

Check and if necessary, change the timezone...

date
mv /etc/localtime /etc/localtime.backup
ln -s /usr/share/zoneinfo/Europe/London /etc/localtime
date

Delete and create the database...

mysql -u root -p -e "DROP DATABASE db_name; CREATE DATABASE db_name;"

Import the database from the sql file, check and exit out of the container...

mysql -u root -p db_name < /tmp/db_name.sql
mysql -u root -p -e "use db_name; show tables;"
rm /tmp/db_name.sql
exit
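As an alternative to copying the file in and logging in, the import can be piped from the host in one go. This is only a sketch: it assumes the container still has MYSQL_ROOT_PASSWORD in its environment (as set in the compose file above), and import_sql and the DOCKER override are made-up names...

```shell
# Pipe a SQL dump on the host into a database inside a running MySQL container.
# The root password is read from the container's own environment, not the host.
# DOCKER is a hypothetical override (defaults to the docker binary).
import_sql() {
    container="$1"
    db="$2"
    dump="$3"
    [ -r "$dump" ] || { echo "cannot read $dump" >&2; return 1; }
    ${DOCKER:-docker} exec -i "$container" \
        sh -c 'exec mysql -u root -p"$MYSQL_ROOT_PASSWORD" "$1"' sh "$db" < "$dump"
}
```

For example, import_sql mysql_container_name db_name /path/to/backup_unzipped_wordpress/db_name.sql, run on the host after the database has been re-created.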

Edit the wp-config.php on your host server to match new DB_HOST and also add extra variables to be sure...

nano /path/to/docker/folder/html/wp-config.php

define( 'WP_HOME', 'http://staging.domain.co.uk' );
define( 'WP_SITEURL', 'http://staging.domain.co.uk' );

Install WordPress CLI in the running container...

docker exec -it wordpress_container_name bash
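The download commands are not listed here, so the following is only a sketch of one way to do it inside the container: fetch the wp-cli.phar build published by the WP-CLI project and save it as ./wp, which is the name the search and replace commands expect. install_wp_cli and the CURL override are made-up names, and it assumes curl is available in the image...

```shell
# Download WP-CLI into the current directory as ./wp and make it executable.
# CURL is a hypothetical override (defaults to the curl binary).
install_wp_cli() {
    ${CURL:-curl} -fsSL -o wp \
        https://raw.githubusercontent.com/wp-cli/builds/gh-pages/phar/wp-cli.phar &&
    chmod +x wp &&
    echo "wp-cli saved as ./wp"
}
```

Afterwards, ./wp --allow-root --info should confirm that it runs.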

Search and replace the original site url...

./wp --allow-root search-replace 'http://www.domain.co.uk/' 'http://staging.domain.co.uk/' --dry-run
./wp --allow-root search-replace 'http://www.domain.co.uk/' 'http://staging.domain.co.uk/'

Start your web browser and go to the test staging web site!

WordPress CLI

In your stack, set up the usual two DB + WordPress containers, then add a third service for wp-cli...

version: "3"
services:
  www.domain.uk-wordpress_db:
    image: mysql:5.7
    container_name: www.domain.uk-wordpress_db
    volumes:
      - ./data/db:/var/lib/mysql
    restart: always
    environment:
      - MYSQL_ROOT_PASSWORD=password
      - MYSQL_DATABASE=wordpress
      - MYSQL_USER=wordpress
      - MYSQL_PASSWORD=password

  www.domain.uk-wordpress:
    depends_on:
      - www.domain.uk-wordpress_db
    image: wordpress:latest
    container_name: www.domain.uk-wordpress
    volumes:
      - ./data/html:/var/www/html
    expose:
      - 80
    restart: always
    environment:
      - VIRTUAL_HOST=www.domain.uk
      - WORDPRESS_DB_HOST=www.domain.uk-wordpress_db:3306
      - WORDPRESS_DB_NAME=wordpress
      - WORDPRESS_DB_USER=wordpress
      - WORDPRESS_DB_PASSWORD=password

  www.domain.uk-wordpress-cli:
    image: wordpress:cli
    container_name: www.domain.uk-wordpress-cli
    volumes:
      - ./data/html:/var/www/html
    environment:
      - WP_CLI_CACHE_DIR=/tmp/
      - VIRTUAL_HOST=www.domain.uk
      - WORDPRESS_DB_HOST=www.domain.uk-wordpress_db:3306
      - WORDPRESS_DB_NAME=wordpress
      - WORDPRESS_DB_USER=wordpress
      - WORDPRESS_DB_PASSWORD=password
    working_dir: /var/www/html
    user: "33:33"
networks:
  default:
    external:
      name: nginx-proxy-manager

...then start it all up.

docker-compose up -d

Then, run your wp-cli commands (e.g. wp user list) on the end of a docker-compose run command...

docker-compose run --rm www.domain.uk-wordpress-cli wp --info
docker-compose run --rm www.domain.uk-wordpress-cli wp cli version
docker-compose run --rm www.domain.uk-wordpress-cli wp user list
docker-compose run --rm www.domain.uk-wordpress-cli wp help theme
docker-compose run --rm www.domain.uk-wordpress-cli wp theme delete --all
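To save typing the long prefix each time, a small wrapper can help. This is a sketch only; wpcli, COMPOSE and WP_CLI_SERVICE are made-up names, and the default service name is the one from the stack above...

```shell
# Run any wp-cli command through the wp-cli service of the stack.
# COMPOSE and WP_CLI_SERVICE are hypothetical overrides with defaults
# matching the stack shown in this section.
wpcli() {
    ${COMPOSE:-docker-compose} run --rm \
        "${WP_CLI_SERVICE:-www.domain.uk-wordpress-cli}" wp "$@"
}
```

Run it from the folder holding the docker-compose.yml, for example wpcli user list or wpcli plugin list.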

SSL Behind A Reverse Proxy

https://wiki.indie-it.com/wiki/WordPress#SSL_When_Using_A_Reverse_Proxy

Email Server (mailu)

Mailu is a simple yet full-featured mail server as a set of Docker images. It is free software (both as in free beer and as in free speech), open to suggestions and external contributions. The project aims at providing people with an easily setup, easily maintained and full-featured mail server while not shipping proprietary software nor unrelated features often found in popular groupware.

https://hub.docker.com/u/mailu

https://github.com/Mailu/Mailu

Postfix Admin

https://hub.docker.com/_/postfixadmin

Email Server (docker-mailserver)

https://github.com/docker-mailserver

https://github.com/docker-mailserver/docker-mailserver

https://github.com/docker-mailserver/docker-mailserver-admin

Postscreen

Postscreen is an SMTP filter that blocks spambots (or zombie machines) away from the real Postfix smtpd daemon, so Postfix does not feel overloaded and can process legitimate emails more efficiently.

The example below shows a typical spambot attempt at accessing the SMTP service and being stopped...

Jun 24 10:42:26 mail postfix/postscreen[203907]: CONNECT from [212.70.149.56]:19452 to [172.23.0.2]:25
Jun 24 10:42:26 mail postfix/dnsblog[386054]: addr 212.70.149.56 listed by domain b.barracudacentral.org as 127.0.0.2
Jun 24 10:42:26 mail postfix/dnsblog[402550]: addr 212.70.149.56 listed by domain list.dnswl.org as 127.0.10.3
Jun 24 10:42:26 mail postfix/dnsblog[407802]: addr 212.70.149.56 listed by domain bl.mailspike.net as 127.0.0.2
Jun 24 10:42:26 mail postfix/dnsblog[386155]: addr 212.70.149.56 listed by domain psbl.surriel.com as 127.0.0.2
Jun 24 10:42:29 mail postfix/postscreen[203907]: PREGREET 11 after 2.9 from [212.70.149.56]:19452: EHLO User\r\n
Jun 24 10:42:29 mail postfix/postscreen[203907]: DISCONNECT [212.70.149.56]:19452
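To see which addresses are being turned away most often, the PREGREET lines can be counted per IP. A minimal sketch that reads log text on standard input and assumes the log format shown in the example; pregreet_ips is a made-up helper name...

```shell
# Read mail log lines on stdin and print "IP count" for every address
# that postscreen rejected with a PREGREET event.
pregreet_ips() {
    sed -n 's/.*postscreen\[[0-9]*]: PREGREET .* from \[\([^]]*\)]:.*/\1/p' |
        sort | uniq -c | awk '{print $2, $1}'
}
```

For example: docker exec mail.mydomain.org.uk-mailserver cat /var/log/mail.log | pregreet_ips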

Postscreen is enabled by default but there are a few settings to tweak to get the best out of it.

Edit your data/config/postfix-main.cf file and add the following lines, making sure the mynetworks line contains your own Docker host and container IP addresses (172.19.0.1 and 172.19.0.2 in this example)...

mynetworks = 127.0.0.0/8 [::1]/128 [fe80::]/64 172.19.0.2/32 172.19.0.1/32
postscreen_greet_action = drop
postscreen_pipelining_enable = yes
postscreen_pipelining_action = drop
postscreen_non_smtp_command_enable = yes
postscreen_non_smtp_command_action = drop
postscreen_bare_newline_enable = yes
postscreen_bare_newline_action = drop

Enable and Configure Postscreen in Postfix to Block Spambots

Postgrey

SpamAssassin

SpamAssassin is run by Amavis (amavisd-new, a mail content filter) with the user 'amavis'.

Show Bayes Database Stats

docker exec --user amavis mail.mydomain.org.uk-mailserver sa-learn --dump magic --dbpath /var/lib/amavis/.spamassassin

Learn Ham

docker exec --user amavis mail.mydomain.org.uk-mailserver sa-learn --ham --progress /var/mail/mydomain.org.uk/info/cur --dbpath /var/lib/amavis/.spamassassin

Backup and Restore from Existing Mail Server

On the old server...

/bin/su -l -c '/usr/bin/sa-learn --backup > sa-learn_backup.txt' debian-spamd
rsync -avP /var/lib/spamassassin/sa-learn_backup.txt user@mail.mydomain.org.uk:/tmp/

On the new server...

docker cp /tmp/sa-learn_backup.txt mail.mydomain.org.uk-mailserver:/tmp/
docker exec --user amavis mail.mydomain.org.uk-mailserver sa-learn --sync --dbpath /var/lib/amavis/.spamassassin
docker exec --user amavis mail.mydomain.org.uk-mailserver sa-learn --clear --dbpath /var/lib/amavis/.spamassassin
docker exec --user amavis mail.mydomain.org.uk-mailserver sa-learn --restore /tmp/sa-learn_backup.txt --dbpath /var/lib/amavis/.spamassassin
docker exec --user amavis mail.mydomain.org.uk-mailserver sa-learn --sync --dbpath /var/lib/amavis/.spamassassin
docker exec --user amavis mail.mydomain.org.uk-mailserver sa-learn --dump magic --dbpath /var/lib/amavis/.spamassassin

Fail2Ban

List jails...

docker exec -it mail.mydomain.org.uk-mailserver fail2ban-client status

Status
|- Number of jail: 3
`- Jail list: dovecot, postfix, postfix-sasl

Manually ban IP address in named jail...

docker exec -it mail.mydomain.org.uk-mailserver fail2ban-client set postfix banip 212.70.149.56

Check banned IPs...

docker exec -it mail.mydomain.org.uk-mailserver fail2ban-client status postfix

Status for the jail: postfix
|- Filter
|  |- Currently failed: 2
|  |- Total failed: 2
|  `- File list: /var/log/mail.log
`- Actions
   |- Currently banned: 1
   |- Total banned: 1
   `- Banned IP list: 212.70.149.56

https://www.the-lazy-dev.com/en/install-fail2ban-with-docker/

Backups

Autodiscover

Create SRV and A record entries in your DNS for the services...

$ORIGIN domain.org.uk.
@                    300 IN TXT   "v=spf1 mx ~all; mailconf=https://autoconfig.domain.org.uk/mail/config-v1.1.xml"
_autodiscover._tcp   300 IN SRV   0 0 443 autodiscover.domain.org.uk.
_imap._tcp           300 IN SRV   0 0 0 .
_imaps._tcp          300 IN SRV   0 1 993 mail.domain.org.uk.
_ldap._tcp           300 IN SRV   0 0 636 mail.domain.org.uk.
_pop3._tcp           300 IN SRV   0 0 0 .
_pop3s._tcp          300 IN SRV   0 0 0 .
_submission._tcp     300 IN SRV   0 1 587 mail.domain.org.uk.
autoconfig           300 IN A     3.10.67.19
autodiscover         300 IN A     3.10.67.19
imap                 300 IN CNAME mail
mail                 300 IN A     3.10.67.19
smtp                 300 IN CNAME mail
www                  300 IN A     3.10.67.19

docker-compose.yml

services:
  mailserver-autodiscover:
    image: monogramm/autodiscover-email-settings:latest
    container_name: mail.domain.org.uk-mailserver-autodiscover
    environment:
      - COMPANY_NAME=My Company
      - SUPPORT_URL=https://autodiscover.domain.org.uk
      - DOMAIN=domain.org.uk
      - IMAP_HOST=mail.domain.org.uk
      - IMAP_PORT=993
      - IMAP_SOCKET=SSL
      - SMTP_HOST=mail.domain.org.uk
      - SMTP_PORT=587
      - SMTP_SOCKET=STARTTLS
    restart: unless-stopped
networks:
  default:
    external:
      name: nginx-proxy-manager

monogramm/autodiscover-email-settings

Testing SSL Certificates

docker exec mailserver openssl s_client -connect 0.0.0.0:25 -starttls smtp -CApath /etc/ssl/certs/

Internet Speedtest

https://github.com/henrywhitaker3/Speedtest-Tracker

Emby Media Server

https://emby.media/docker-server.html

https://hub.docker.com/r/emby/embyserver

AWS CLI

docker run --rm -it -v "/root/.aws:/root/.aws" amazon/aws-cli configure
docker run --rm -it -v "/root/.aws:/root/.aws" amazon/aws-cli s3 ls
docker run --rm -it -v "/root/.aws:/root/.aws" amazon/aws-cli route53 list-hosted-zones

https://docs.aws.amazon.com/cli/latest/userguide/install-cliv2-docker.html

Let's Encrypt

Force RENEW a standalone certificate with the new preferred chain of "ISRG Root X1"

docker run -it --rm --name certbot -v "/usr/bin:/usr/bin" -v "/root/.aws:/root/.aws" -v "/etc/letsencrypt:/etc/letsencrypt" -v "/var/lib/letsencrypt:/var/lib/letsencrypt" certbot/certbot --force-renewal --preferred-chain "ISRG Root X1" certonly --standalone --email me@mydomain.com --agree-tos -d www.mydomain.com

Issue a wildcard certificate...

docker run -it --rm --name certbot -v "/usr/bin:/usr/bin" -v "/root/.aws:/root/.aws" -v "/etc/letsencrypt:/etc/letsencrypt" -v "/var/lib/letsencrypt:/var/lib/letsencrypt" certbot/dns-route53 certonly --dns-route53 --domain "example.com" --domain "*.example.com"

Check your certificates...

docker run -it --rm --name certbot -v "/usr/bin:/usr/bin" -v "/root/.aws:/root/.aws" -v "/etc/letsencrypt:/etc/letsencrypt" -v "/var/lib/letsencrypt:/var/lib/letsencrypt" certbot/certbot certificates

Renew a certificate...

docker run -it --rm --name certbot -v "/usr/bin:/usr/bin" -v "/root/.aws:/root/.aws" -v "/etc/letsencrypt:/etc/letsencrypt" -v "/var/lib/letsencrypt:/var/lib/letsencrypt" certbot/dns-route53 renew

If you have multiple profiles in your .aws/config then you will need to pass the AWS_PROFILE variable to the docker container...

docker run -it --rm --name certbot -v "/usr/bin:/usr/bin" -v "/root/.aws:/root/.aws" -v "/etc/letsencrypt:/etc/letsencrypt" -v "/var/lib/letsencrypt:/var/lib/letsencrypt" -e "AWS_PROFILE=certbot" certbot/dns-route53 renew
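All of the commands above share the same long list of volume mounts, so a wrapper keeps them in one place. A sketch only, copying the mounts exactly as used above; certbot_docker and the DOCKER override are made-up names, and AWS_PROFILE is passed through only when it is set in your shell...

```shell
# Run a certbot image with the volume mounts used throughout this section.
# Usage: certbot_docker <image> <certbot arguments...>
# DOCKER is a hypothetical override (defaults to the docker binary).
certbot_docker() {
    image="$1"
    shift
    ${DOCKER:-docker} run -it --rm --name certbot \
        -v "/usr/bin:/usr/bin" \
        -v "/root/.aws:/root/.aws" \
        -v "/etc/letsencrypt:/etc/letsencrypt" \
        -v "/var/lib/letsencrypt:/var/lib/letsencrypt" \
        ${AWS_PROFILE:+-e "AWS_PROFILE=$AWS_PROFILE"} \
        "$image" "$@"
}
```

For example, certbot_docker certbot/certbot certificates, or AWS_PROFILE=certbot certbot_docker certbot/dns-route53 renew.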

https://certbot.eff.org/docs/install.html#running-with-docker

VPN

Gluetun

Gluetun VPN client

Lightweight swiss-knife-like VPN client to tunnel to Cyberghost, ExpressVPN, FastestVPN, HideMyAss, IPVanish, IVPN, Mullvad, NordVPN, Perfect Privacy, Privado, Private Internet Access, PrivateVPN, ProtonVPN, PureVPN, Surfshark, TorGuard, VPNUnlimited, VyprVPN, WeVPN and Windscribe VPN servers using Go, OpenVPN or Wireguard, iptables, DNS over TLS, ShadowSocks and an HTTP proxy.

Connect a container to Gluetun

OpenVPN

Server

https://hub.docker.com/r/linuxserver/openvpn-as

Client

https://hub.docker.com/r/dperson/openvpn-client

Routing Containers Through Container

sudo docker run -it --net=container:vpn -d some/docker-container

OpenVPN-PiHole

https://github.com/Simonwep/openvpn-pihole

WireHole

WireHole is a combination of WireGuard, PiHole, and Unbound in a docker-compose project with the intent of enabling users to quickly and easily create and deploy a personally managed full or split-tunnel WireGuard VPN with ad blocking capabilities (via Pihole), and DNS caching with additional privacy options (via Unbound).

https://github.com/IAmStoxe/wirehole

To view a QR code, run this ...

docker exec -it wireguard /app/show-peer 1

WireGuard

Use WireHole instead!

docker-compose.yml

version: "2.1"
services:
  wireguard:
    image: ghcr.io/linuxserver/wireguard
    container_name: wireguard
    cap_add:
      - NET_ADMIN
      - SYS_MODULE
    environment:
      - PUID=1000
      - PGID=1000
      - TZ=Europe/London
      - SERVERURL=wireguard.domain.uk
      - SERVERPORT=51820
      - PEERS=3
      - PEERDNS=auto
      - INTERNAL_SUBNET=10.13.13.0
      - ALLOWEDIPS=0.0.0.0/0
    volumes:
      - ./data/config:/config
      - /lib/modules:/lib/modules
    ports:
      - 51820:51820/udp
    sysctls:
      - net.ipv4.conf.all.src_valid_mark=1
    restart: unless-stopped

https://hub.docker.com/r/linuxserver/wireguard

To show the QR code

docker exec -it wireguard /app/show-peer 1
docker exec -it wireguard /app/show-peer 2
docker exec -it wireguard /app/show-peer 3

Error: const struct ipv6_stub

If you receive an error in the container logs about not being able to compile the kernel module, then follow the instructions to compile the WireGuard kernel module and tools in your host system.

https://github.com/linuxserver/docker-wireguard/issues/46#issuecomment-708278250

Force Docker Containers to use a VPN for connection

ffmpeg

docker pull jrottenberg/ffmpeg
docker run jrottenberg/ffmpeg -h
docker run jrottenberg/ffmpeg -i /path/to/input.mkv -stats $ffmpeg_options - > out.mp4
docker run -v $(pwd):$(pwd) -w $(pwd) jrottenberg/ffmpeg -y -i input.mkv -t 00:00:05.00 -vf scale=-1:360 output.mp4

https://registry.hub.docker.com/r/jrottenberg/ffmpeg

https://github.com/jrottenberg/ffmpeg

https://medium.com/coconut-stories/using-ffmpeg-with-docker-94523547f35c

https://github.com/linuxserver/docker-ffmpeg

MediaInfo

Install ...

sudo docker pull jlesage/mediainfo

Run ...

docker run --rm --name=mediainfo -e USER_ID=$(id -u) -e GROUP_ID=$(id -g) -v $(pwd):$(pwd):ro jlesage/mediainfo su-exec "$(id -u):$(id -g)" /usr/bin/mediainfo --help

https://github.com/jlesage/docker-mediainfo

MKV Toolnix

Install ...

sudo docker pull jlesage/mkvtoolnix

Run ...

mkvextract

docker run --rm --name=mkvextract -e USER_ID=$(id -u) -e GROUP_ID=$(id -g) -v $(pwd):$(pwd):rw jlesage/mkvtoolnix su-exec "$(id -u):$(id -g)" /usr/bin/mkvextract "$(pwd)/filename.mkv" tracks 3:"$(pwd)/filename.eng.srt"

mkvpropedit

docker run --rm --name=mkvpropedit -e USER_ID=$(id -u) -e GROUP_ID=$(id -g) -v $(pwd):$(pwd):rw jlesage/mkvtoolnix su-exec "$(id -u):$(id -g)" /usr/bin/mkvpropedit "$(pwd)/filename.mkv" --edit track:a1 --set language=eng

https://github.com/jlesage/docker-mkvtoolnix

MakeMKV

This will NOT work on a Raspberry Pi.

https://github.com/jlesage/docker-makemkv

Use this in combination with ffmpeg or HandBrake (as shown below) and FileBot to process your media through to your media server, like Emby or Plex.

MakeMKV > HandBrake > FileBot > Emby

To make this work with your DVD drive (/dev/sr0), you also need to pass through the second device (/dev/sg0). I don't get it, but it works.

/root/docker/stacks/makemkv/docker-compose.yml

version: '3'
services:
  makemkv:
    image: jlesage/makemkv
    container_name: makemkv
    ports:
      - "0.0.0.0:5801:5800"
    volumes:
      - "/home/user/.MakeMKV_DOCKER:/config:rw"
      - "/home/user/:/storage:ro"
      - "/home/user/ToDo/MakeMKV/output:/output:rw"
    devices:
      - "/dev/sr0:/dev/sr0"
      - "/dev/sg0:/dev/sg0"
    environment:
      - USER_ID=1000
      - GROUP_ID=1000
      - TZ=Europe/London
      - MAKEMKV_KEY=your_licence_key
      - AUTO_DISC_RIPPER=1

Troubleshooting

PROBLEM = "driver failed programming external connectivity on endpoint makemkv: Error starting userland proxy: listen tcp6 [::]:5800: socket: address family not supported by protocol."

SOLUTION = Put 0.0.0.0:5801 in the published ports line of docker compose to restrict the network to IPv4.

Docker Process Output

CONTAINER ID   IMAGE               COMMAND   CREATED        STATUS        PORTS                              NAMES
4a8b3106b00b   jlesage/handbrake   "/init"   40 hours ago   Up 40 hours   5900/tcp, 0.0.0.0:5802->5800/tcp   handbrake
89fe3ba8a31e   jlesage/makemkv     "/init"   40 hours ago   Up 40 hours   5900/tcp, 0.0.0.0:5801->5800/tcp   makemkv

Command Line

docker run --rm -v "/home/user/.MakeMKV_DOCKER:/config:rw" -v "/home/user/:/storage:ro" -v "/home/user/ToDo/MakeMKV/output:/output:rw" --device /dev/sr0 --device /dev/sg0 --device /dev/sg1 jlesage/makemkv /opt/makemkv/bin/makemkvcon mkv disc:0 all /output

https://github.com/jlesage/docker-makemkv/issues/141

HandBrake

This will NOT work on a Raspberry Pi.

Use this in combination with ffmpeg or MakeMKV (as shown above) and FileBot to process your media through to your media server, like Emby or Plex.

I have changed the port from 5800 to 5802 because Jocelyn's other Docker image for MakeMKV uses the same port (so I moved that one as well, to 5801 - see above).

To make this work with your DVD drive (/dev/sr0) you need to have the second device (/dev/sg0) in order for it to work. I don't get it, but it works.

YouTube / DB Tech - How to install HandBrake in Docker

Blog / DB Tech - How to install HandBrake in Docker

Docker HandBrake by Jocelyn Le Sage

Docker Image by Jocelyn Le Sage

/root/docker/stacks/handbrake/docker-compose.yml

version: '3'
services:
  handbrake:
    image: jlesage/handbrake
    container_name: handbrake
    ports:
      - "0.0.0.0:5802:5800"
    volumes:
      - "/home/paully:/storage:ro"
      - "/home/paully/ToDo/HandBrake/config:/config:rw"
      - "/home/paully/ToDo/HandBrake/watch:/watch:rw"
      - "/home/paully/ToDo/HandBrake/output:/output:rw"
    devices:
      - "/dev/sr0:/dev/sr0"
      - "/dev/sg0:/dev/sg0"
    environment:
      - USER_ID=1000
      - GROUP_ID=1000
      - TZ=Europe/London

Docker Process Output

CONTAINER ID   IMAGE               COMMAND   CREATED        STATUS        PORTS                              NAMES
4a8b3106b00b   jlesage/handbrake   "/init"   40 hours ago   Up 40 hours   5900/tcp, 0.0.0.0:5802->5800/tcp   handbrake
89fe3ba8a31e   jlesage/makemkv     "/init"   40 hours ago   Up 40 hours   5900/tcp, 0.0.0.0:5801->5800/tcp   makemkv

Command Line

docker run --rm -v "/home/paully/:/storage:ro" -v "/home/paully/ToDo/HandBrake/config:/config:rw" -v "/home/paully/ToDo/HandBrake/watch:/watch:rw" -v "/home/paully/ToDo/HandBrake/output:/output:rw" --device /dev/sr0 --device /dev/sg0 jlesage/handbrake /usr/bin/HandBrakeCLI --input "/output/file.mkv" --stop-at duration:120 --preset 'Fast 480p30' --non-anamorphic --encoder-preset slow --quality 22 --deinterlace --lapsharp --audio 1 --aencoder copy:ac3 --no-markers --output "/output/file.mp4"

docker run --rm -v "/home/paully/:/storage:ro" -v "/home/paully/ToDo/HandBrake/config:/config:rw" -v "/home/paully/ToDo/HandBrake/watch:/watch:rw" -v "/home/paully/ToDo/HandBrake/output:/output:rw" jlesage/handbrake /usr/bin/HandBrakeCLI --input "/storage/input.mkv" --preset 'Super HQ 2160p60 4K HEVC Surround' --encoder x265 --non-anamorphic --audio 1 --aencoder copy:eac3 --no-markers --output "/output/output.mkv"

FileBot

Setup

Create your directories for data volumes (https://github.com/jlesage/docker-filebot#data-volumes) ...

mkdir -p ~/filebot/{config,output,watch}

The license file received via email can be saved on the host, into the configuration directory of the container (i.e. in the directory mapped to /config). Then, start or restart the container to have it automatically installed. NOTE: The license file is expected to have a .psm extension.

Usage

WORK IN PROGRESS

Rather than running all the time, we run the image ad-hoc with the --rm option, which removes the container as soon as it exits...

docker run --rm --name=filebot -v ~/filebot/config:/config:rw -v $HOME:/storage:rw -v ~/filebot/output:/output:rw -v ~/filebot/watch:/watch:rw -e AMC_ACTION=test jlesage/filebot

https://github.com/jlesage/docker-filebot

https://github.com/filebot/filebot-docker

Automated Downloaderr

This takes the hassle out of going through the various web sites to find stuff and be bombarded with ads and pop-ups.

- FlareSolverr

- Prowlarr

- Radarr + Sonarr + Bazarr

- Transmission + NZBGet

- Tdarr

FlareSolverr > Prowlarr > Radarr + Sonarr + Bazarr > Transmission + NZBGet > Tdarr

HOW TO RESTART THE RRS IN ORDER ON PORTAINER OR OMV

Stacks > Click on each one > Stop > count to 10 > Start

- WireGuard

- FlareSolverr

- Prowlarr

- Radarr

- Sonarr
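The same stop, count to 10, start routine can be run from the command line. A rough sketch, assuming each stack is a single container named as in the list above; restart_in_order, DOCKER and RESTART_PAUSE are made-up names...

```shell
# Stop and start each named container in the order given,
# pausing between stop and start (10 seconds by default).
# DOCKER and RESTART_PAUSE are hypothetical overrides.
restart_in_order() {
    for name in "$@"; do
        ${DOCKER:-docker} stop "$name"
        sleep "${RESTART_PAUSE:-10}"
        ${DOCKER:-docker} start "$name"
    done
}
```

For example: restart_in_order wireguard flaresolverr prowlarr radarr sonarr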

ONE DAY WE WILL GET A SINGLE STACK WITH ALL THE RIGHT CONTAINERS STARTING IN THE RIGHT ORDER

https://hotio.dev/containers/autoscan/

ONE APP TO RULE THEM ALL

https://github.com/JagandeepBrar/LunaSea

GUIDE FOR DIRECTORY STRUCTURE

https://trash-guides.info/Hardlinks/How-to-setup-for/Docker/

This setup renames files automatically but still leaves you to copy them into your actual Plex or Emby folders yourself.

It is possible to do hard linking and let the rrrrs control all the files but I am not a fan of that.

This way, the files get renamed and moved to a 'halfway' house where you can check them and then simply move them to your desired location.

METHOD

Create the directories ...

mkdir -p /path/to/data/{media,torrents,usenet}/{movies,music,tv}

mkdir -p /path/to/docker/appdata/{radarr,sonarr,bazarr,nzbget}

Change the ownership and permissions ...

chown -R admin:users /path/to/data/ /path/to/docker/

find /path/to/data/ /path/to/docker/ -type d -exec chmod 0755 {} \;

find /path/to/data/ /path/to/docker/ -type d -exec chmod g+s {} \;
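The directory and permission steps can be wrapped into one function that takes the base path as an argument. A sketch only: make_data_tree is a made-up name, loops are used instead of brace expansion so it also works in plain sh, the two chmod steps are combined as mode 2755, and the chown step is left out because the owner differs per system...

```shell
# Create the data and appdata folders under the given base path and
# set every directory to 0755 plus the setgid bit (mode 2755).
make_data_tree() {
    root="$1"
    for top in media torrents usenet; do
        for kind in movies music tv; do
            mkdir -p "$root/data/$top/$kind" || return 1
        done
    done
    for app in radarr sonarr bazarr nzbget; do
        mkdir -p "$root/docker/appdata/$app" || return 1
    done
    find "$root/data" "$root/docker" -type d -exec chmod 2755 {} +
}
```

For example, make_data_tree /path/to followed by the chown command above.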

Finished directory structure ...

/srv/dev-disk-by-uuid-7f81e7b6-1a05-4232-893b-f34c046b2bdb/data/
|-- media
|   |-- movies
|   |-- music
|   `-- tv
|-- torrents
|   |-- movies
|   |-- music
|   `-- tv
`-- usenet
    |-- completed
    |   |-- movies
    |   `-- tv
    |-- movies
    |-- music
    |-- nzb
    |   `-- Movie.Name.720p.nzb.queued
    |-- tmp
    `-- tv

PORTAINER STACK

This is from Open Media Vault (OMV) so the volume paths are long.

This works but needs the whole VPN thing added (which changes ports etc) but for now ...

Portainer > Stacks > Add Stack > Datarr

version: "3.2"
services:
  prowlarr:
    container_name: prowlarr
    image: hotio/prowlarr:latest
    restart: unless-stopped
    logging:
      driver: json-file
    network_mode: bridge
    ports:
      - 9696:9696
    environment:
      - PUID=998
      - PGID=100
      - TZ=Europe/London
    volumes:
      - /etc/localtime:/etc/localtime:ro
      - /srv/dev-disk-by-uuid-7f81e7b6-1a05-4232-893b-f34c046b2bdb/docker/appdata/prowlarr:/config
      - /srv/dev-disk-by-uuid-7f81e7b6-1a05-4232-893b-f34c046b2bdb/data:/data

  radarr:
    container_name: radarr
    image: hotio/radarr:latest
    restart: unless-stopped
    logging:
      driver: json-file
    network_mode: bridge
    ports:
      - 7878:7878
    environment:
      - PUID=998
      - PGID=100
      - TZ=Europe/London
    volumes:
      - /etc/localtime:/etc/localtime:ro
      - /srv/dev-disk-by-uuid-7f81e7b6-1a05-4232-893b-f34c046b2bdb/docker/appdata/radarr:/config
      - /srv/dev-disk-by-uuid-7f81e7b6-1a05-4232-893b-f34c046b2bdb/data:/data

  sonarr:
    container_name: sonarr
    image: hotio/sonarr:latest
    restart: unless-stopped
    logging:
      driver: json-file
    network_mode: bridge
    ports:
      - 8989:8989
    environment:
      - PUID=998
      - PGID=100
      - TZ=Europe/London
    volumes:
      - /etc/localtime:/etc/localtime:ro
      - /srv/dev-disk-by-uuid-7f81e7b6-1a05-4232-893b-f34c046b2bdb/docker/appdata/sonarr:/config
      - /srv/dev-disk-by-uuid-7f81e7b6-1a05-4232-893b-f34c046b2bdb/data:/data

  bazarr:
    container_name: bazarr
    image: hotio/bazarr:latest
    restart: unless-stopped
    logging:
      driver: json-file
    network_mode: bridge
    ports:
      - 6767:6767
    environment:
      - PUID=998
      - PGID=100
      - TZ=Europe/London
    volumes:
      - /etc/localtime:/etc/localtime:ro
      - /srv/dev-disk-by-uuid-7f81e7b6-1a05-4232-893b-f34c046b2bdb/docker/appdata/bazarr:/config
      - /srv/dev-disk-by-uuid-7f81e7b6-1a05-4232-893b-f34c046b2bdb/data/media:/data/media

  nzbget:
    container_name: nzbget
    image: hotio/nzbget:latest
    restart: unless-stopped
    logging:
      driver: json-file
    network_mode: bridge
    ports:
      - 6789:6789
    environment:
      - PUID=998
      - PGID=100
      - TZ=Europe/London
    volumes:
      - /etc/localtime:/etc/localtime:ro
      - /srv/dev-disk-by-uuid-7f81e7b6-1a05-4232-893b-f34c046b2bdb/docker/appdata/nzbget:/config
      - /srv/dev-disk-by-uuid-7f81e7b6-1a05-4232-893b-f34c046b2bdb/data/usenet:/data/usenet:rw

OLD NOTES

Create a docker network which Jackett and Radarr share to talk to each other...

sudo docker network create jackett-radarr

...then continue setting up the containers below.

FlareSolverr

FlareSolverr is a proxy server to bypass Cloudflare protection.

FlareSolverr starts a proxy server and it waits for user requests in an idle state using few resources. When some request arrives, it uses puppeteer with the stealth plugin to create a headless browser (Chrome). It opens the URL with user parameters and waits until the Cloudflare challenge is solved (or timeout). The HTML code and the cookies are sent back to the user, and those cookies can be used to bypass Cloudflare using other HTTP clients.

Radarr > Jackett > FlareSolverr > Internet

https://github.com/FlareSolverr/FlareSolverr

https://hub.docker.com/r/flaresolverr/flaresolverr

Some indexers are protected by CloudFlare or similar services and Jackett is not able to solve the challenges. For these cases, FlareSolverr has been integrated into Jackett. This service is in charge of solving the challenges and configuring Jackett with the necessary cookies. Setting up this service is optional, most indexers don't need it.

Install FlareSolverr service using a Docker container, then configure FlareSolverr API URL in Jackett. For example: http://172.17.0.2:8191

Command line...

docker run -d \
  --name=flaresolverr \
  -p 8191:8191 \
  -e LOG_LEVEL=info \
  --restart unless-stopped \
  ghcr.io/flaresolverr/flaresolverr:latest

Docker compose...

---
version: "2.1"
services:
  flaresolverr:
    image: ghcr.io/flaresolverr/flaresolverr:latest
    container_name: flaresolverr
    environment:
      - PUID=1000
      - PGID=1000
      - LOG_LEVEL=${LOG_LEVEL:-info}
      - LOG_HTML=${LOG_HTML:-false}
      - CAPTCHA_SOLVER=${CAPTCHA_SOLVER:-none}
      - TZ=Europe/London
    ports:
      - "${PORT:-8191}:8191"
    restart: unless-stopped

Usage

To use it, you have to add a Proxy Server under Prowlarr > Settings > Indexers > Indexer Proxies

Testing

curl -L -X POST 'http://localhost:8191/v1' -H 'Content-Type: application/json' --data-raw '{ "cmd": "request.get", "url":"https://www.paully.co.uk/", "maxTimeout": 60000 }'

{"status":"ok","message":"","startTimestamp":1651659265156,"endTimestamp":1651659269585,"version":"v2.2.4","solution":

Jackett

Jackett works as a proxy server: it translates queries from apps (Sonarr, SickRage, CouchPotato, Mylar, etc) into tracker-site-specific http queries, parses the html response, then sends results back to the requesting software. This allows for getting recent uploads (like RSS) and performing searches. Jackett is a single repository of maintained indexer scraping and translation logic - removing the burden from other apps.

So, this is where you build your list of web sites "with content you want" ;-)

https://fleet.linuxserver.io/image?name=linuxserver/jackett

https://docs.linuxserver.io/images/docker-jackett

https://hub.docker.com/r/linuxserver/jackett

https://github.com/Jackett/Jackett

/root/docker/stacks/docker-compose.yml

---
version: "2.1"
services:
  jackett:
    image: ghcr.io/linuxserver/jackett
    container_name: jackett
    environment:
      - PUID=1000
      - PGID=1000
      - TZ=Europe/London
      - AUTO_UPDATE=true
    volumes:
      - ./data/config:/config
      - ./data/downloads:/downloads
    networks:
      - jackett-radarr
    ports:
      - 0.0.0.0:9117:9117
    restart: unless-stopped
networks:
  jackett-radarr:
    external: true

Prowlarr

An alternative to Jackett, and now the preferred application.

https://wiki.servarr.com/prowlarr

https://wiki.servarr.com/prowlarr/quick-start-guide

https://hub.docker.com/r/linuxserver/prowlarr

https://github.com/linuxserver/docker-prowlarr

---
version: "2.1"
services:
  prowlarr:
    image: lscr.io/linuxserver/prowlarr:develop
    container_name: prowlarr
    environment:
      - PUID=1000
      - PGID=1000
      - TZ=Europe/London
    volumes:
      - /path/to/data:/config
    ports:
      - 9696:9696
    restart: unless-stopped

Radarr

Radarr is a movie collection manager for Usenet and BitTorrent users. It can monitor multiple RSS feeds for new movies and will interface with clients and indexers to grab, sort, and rename them. It can also be configured to automatically upgrade the quality of existing files in the library when a better quality format becomes available.

Radarr is the 'man-in-the-middle' to take lists from Jackett and pass them to Transmission to download.

Radarr is the web UI to search for "the content you want" ;-)

https://docs.linuxserver.io/images/docker-radarr

https://github.com/linuxserver/docker-radarr

https://sasquatters.com/radarr-docker/

https://www.smarthomebeginner.com/install-radarr-using-docker/

https://trash-guides.info/Radarr/

https://discord.com/channels/264387956343570434/264388019585286144

So, you use Jackett as an Indexer of content, which answers questions from Radarr, which passes a good result to Transmission...

- Settings > Profiles > delete all but 'any' (and edit that to get rid of naff qualities at the bottom)

- Indexers > Add Indexer > Torznab > complete and TEST then SAVE

- Download Clients > Add Download Client > Transmission > complete and TEST and SAVE

/root/docker/stacks/docker-compose.yml

---
version: "2.1"
services:
  radarr:
    image: ghcr.io/linuxserver/radarr
    container_name: radarr
    environment:
      - PUID=1000
      - PGID=1000
      - TZ=Europe/London
    volumes:
      - ./data/config:/config
      - ./data/downloads:/downloads
      - ./data/torrents:/torrents
    networks:
      - jackett-radarr
    ports:
      - 0.0.0.0:7878:7878
    restart: unless-stopped
networks:
  jackett-radarr:
    external: true
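Both the Jackett and Radarr files mark `jackett-radarr` as `external: true`, so Compose expects that network to exist before either stack starts (it can be created once with `docker network create jackett-radarr`). As a hedged alternative, assuming you are happy to keep both services in one compose file, the network can be declared without `external` and Compose will create it itself. The single-file layout below is an assumption for illustration, not how the stacks above are organised, and the service definitions are trimmed to the network-related keys.

```yaml
# Sketch only: one compose file, network created by Compose itself.
services:
  jackett:
    image: ghcr.io/linuxserver/jackett
    container_name: jackett
    networks:
      - jackett-radarr
    ports:
      - 0.0.0.0:9117:9117
  radarr:
    image: ghcr.io/linuxserver/radarr
    container_name: radarr
    networks:
      - jackett-radarr
    ports:
      - 0.0.0.0:7878:7878
networks:
  jackett-radarr: {}   # no "external: true", so Compose creates and removes it
```

On a shared user-defined network the containers can reach each other by service name, so from Radarr the Jackett address would be http://jackett:9117.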

Sonarr

Sonarr (formerly NZBdrone) is a PVR for Usenet and BitTorrent users. It can monitor multiple RSS feeds for new episodes of your favorite shows and will grab, sort and rename them. It can also be configured to automatically upgrade the quality of files already downloaded when a better quality format becomes available.

https://docs.linuxserver.io/images/docker-sonarr

Docker Compose using a WireGuard VPN container for internet ...

---
version: "2.1"
services:
  sonarr:
    image: ghcr.io/linuxserver/sonarr
    container_name: sonarr
    network_mode: container:wireguard
    environment:
      - PUID=1000
      - PGID=1000
      - TZ=Europe/London
    volumes:
      - ./data/config:/config
      - ./data/downloads:/downloads
      - ./data/torrents:/torrents
    restart: "no"
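Because Sonarr uses `network_mode: container:wireguard`, it shares the WireGuard container's network stack and cannot publish ports of its own. The web UI port therefore has to be published on the WireGuard container. A minimal sketch of that side follows. The image name, the `NET_ADMIN` capability and the config path are assumptions, since the WireGuard service is not shown in this section. Port 8989 is Sonarr's usual web UI port.

```yaml
# Sketch only: the VPN container that Sonarr attaches to.
services:
  wireguard:
    image: lscr.io/linuxserver/wireguard      # assumed image
    container_name: wireguard                 # must match "container:wireguard"
    cap_add:
      - NET_ADMIN                             # assumed: needed to manage the tunnel
    volumes:
      - ./data/wireguard/config:/config       # hypothetical path
    ports:
      - 8989:8989                             # Sonarr web UI, reached via this container
    restart: unless-stopped
```

The WireGuard container has to be running before Sonarr starts, otherwise there is no network namespace for Sonarr to join.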

UPDATE (16 February 2023): the latest image is based on Ubuntu Jammy rather than Alpine, which causes problems if your Docker version is below 20.10.10.

https://docs.linuxserver.io/faq#jammy

To fix this, you can either upgrade your Docker (https://docs.docker.com/engine/install/ubuntu/#install-using-the-convenience-script) or add the following lines to your docker-compose.yml file ...

security_opt:
  - seccomp=unconfined
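A sketch of where those lines sit, assuming the Sonarr service shown earlier: `security_opt` is a per-service key, at the same level as `image` and `environment`.

```yaml
# Sketch only: the rest of the service definition is unchanged.
services:
  sonarr:
    image: ghcr.io/linuxserver/sonarr
    container_name: sonarr
    network_mode: container:wireguard
    security_opt:
      - seccomp=unconfined    # workaround for Docker older than 20.10.10
    restart: "no"
```

This switches off Docker's default seccomp filtering for that container, so upgrading Docker is the cleaner fix where it is possible.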

Bazarr

Bazarr is a companion application to Sonarr and Radarr that manages and downloads subtitles based on your requirements.

Docker compose file ...

---
version: "2.1"
services:
  bazarr:
    image: lscr.io/linuxserver/bazarr:latest
    container_name: bazarr
    environment:
      - PUID=1000
      - PGID=1000
      - TZ=Europe/London
    volumes:
      - /path/to/bazarr/config:/config
      - /path/to/movies:/movies #optional
      - /path/to/tv:/tv #optional
    ports:
      - 6767:6767
    restart: unless-stopped

NZBGet

NZBGet is a Usenet downloader.

You need at least three things before you can use Usenet:

- A Usenet provider (Reddit provides a detailed Provider Map for Usenet)

- An NZB indexer (NZBGeek)

- A Usenet client (NZBGet)

https://hub.docker.com/r/linuxserver/nzbget

https://www.cogipas.com/nzbget-complete-how-to-guide/

The Web GUI can be found at <your-ip>:6789 and the default login details (change ASAP) are...

username: nzbget

password: tegbzn6789

Docker Compose file...

---
version: "2.1"
services:
  nzbget:
    image: lscr.io/linuxserver/nzbget:latest
    container_name: nzbget
    environment:
      - PUID=1000
      - PGID=1000
      - TZ=Europe/London
      - NZBGET_USER=nzbget
      - NZBGET_PASS=tegbzn6789
    volumes:
      - /path/to/data:/config
      - /path/to/downloads:/downloads
    ports:
      - 6789:6789
    restart: unless-stopped
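Since the default login should be changed as soon as possible, one option is to keep the real credentials out of the compose file altogether. The sketch below relies on Compose variable substitution from a `.env` file placed next to `docker-compose.yml`. The values in the comments are placeholders, and whether the image re-applies these variables to an existing configuration is not covered here.

```yaml
# .env (same folder as docker-compose.yml, placeholder values):
#   NZBGET_USER=myuser
#   NZBGET_PASS=a-long-random-password
services:
  nzbget:
    image: lscr.io/linuxserver/nzbget:latest
    container_name: nzbget
    environment:
      - PUID=1000
      - PGID=1000
      - TZ=Europe/London
      - NZBGET_USER=${NZBGET_USER}    # substituted by Compose from .env
      - NZBGET_PASS=${NZBGET_PASS}
    volumes:
      - /path/to/data:/config
      - /path/to/downloads:/downloads
    ports:
      - 6789:6789
    restart: unless-stopped
```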

Tdarr

Tdarr is a popular conditional transcoding application for processing large (or small) media libraries. The application comes in the form of a click-to-run web-app, which you run on your own device and access through a web browser.

Tdarr uses two popular transcoding applications under the hood: FFmpeg and HandBrake (which itself is built on top of FFmpeg).

Tdarr works in a distributed manner where you can use multiple devices to process your library together. It does this using 'Tdarr Nodes' which connect with a central server and pick up tasks so you can put all your spare devices to use.

Each Node can run multiple 'Tdarr Workers' in parallel to maximize the hardware usage % on that Node. For example, a single FFmpeg worker running on a 64 core CPU may only hit ~30% utilization. Running multiple Workers in parallel allows the CPU to hit 100% utilization, allowing you to process your library more quickly.
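As a rough illustration of the server and node split, an extra Node on a second machine could be described with a compose service along these lines. This is a sketch under assumptions: the `tdarr_node` image name and variable names follow the pattern of the Tdarr server container, and the node name, server address and folder paths are hypothetical.

```yaml
# Sketch only: a stand-alone Tdarr Node that reports to a central server.
services:
  tdarr-node:
    container_name: tdarr-node
    image: ghcr.io/haveagitgat/tdarr_node:latest   # assumed node image
    restart: unless-stopped
    environment:
      - TZ=Europe/London
      - PUID=1000
      - PGID=1000
      - UMASK_SET=002
      - nodeName=MySpareMachine      # hypothetical name shown in the Tdarr UI
      - serverIP=192.168.1.10        # hypothetical address of the Tdarr server
      - serverPort=8266              # the server port published by the server
      - inContainer=true
      - ffmpegVersion=6
    volumes:
      - ./data/tdarr/configs:/app/configs
      - ./data/tdarr/logs:/app/logs
      - /home/user/data/media/movies:/input        # assumed to be the same library the server sees
      - ./data/tdarr/transcode_cache:/temp
```

The number of Workers each Node runs in parallel is then set from the Tdarr web UI rather than in the compose file.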

Readarr

https://academy.pointtosource.com/containers/ebooks-calibre-readarr/

Unpackerr

Extracts downloads for Radarr, Sonarr, Lidarr, Readarr, and/or a watch folder, and deletes the extracted files after import.

https://github.com/Unpackerr/unpackerr

Docker Compose - https://github.com/Unpackerr/unpackerr/blob/main/examples/docker-compose.yml

Servarr (All-In-One)

This is a Docker Compose file which starts all the containers in the correct order. This is achieved by making each service dependent on the one that must start before it.
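Before the full file, here is a trimmed three-service sketch of the chaining idea. The service selection is illustrative only and every other key is left out. Note that short-form `depends_on` controls start order only: Compose waits for the earlier container to be started, not for the application inside it to be ready.

```yaml
# Sketch only: start order is wireguard, then prowlarr, then radarr.
services:
  wireguard:
    image: lscr.io/linuxserver/wireguard   # assumed image
  prowlarr:
    image: lscr.io/linuxserver/prowlarr:develop
    depends_on:
      - wireguard    # started after wireguard
  radarr:
    image: ghcr.io/linuxserver/radarr
    depends_on:
      - prowlarr     # started after prowlarr
```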

# tdarr
# unpackerr
# readarr
# bazarr
# sonarr
# radarr
# sabnzbd
# prowlarr
# flaresolverr
# wireguard
services:
  tdarr:
    depends_on:
      - unpackerr
    container_name: tdarr
    image: ghcr.io/haveagitgat/tdarr:latest
    restart: unless-stopped
    network_mode: bridge
    ports:
      - 8265:8265 # webUI port
      - 8266:8266 # server port
    environment:
      - TZ=Europe/London
      - PUID=1000
      - PGID=1000
      - UMASK_SET=002
      - serverIP=0.0.0.0
      - serverPort=8266
      - webUIPort=8265
      - internalNode=true
      - inContainer=true
      - ffmpegVersion=6
      - nodeName=MyInternalNode
    volumes:
      - ./data/tdarr/server:/app/server
      - ./data/tdarr/configs:/app/configs
      - ./data/tdarr/logs:/app/logs
      - ./data/tdarr/transcode_cache:/temp
      - /home/user/data/media/movies:/input
      - /home/user/data/tdarr/movies/output:/output
      - /home/user/Emby:/Emby
  unpackerr:
    depends_on:
      - readarr
    image: golift/unpackerr
    container_name: unpackerr
    network_mode: container:wireguard
    volumes:
      # You need at least this one volume mapped so Unpackerr can find your files to extract.
      # Make sure this matches your Starr apps; the folder mount (/downloads or /data) should be identical.
      - /etc/localtime:/etc/localtime:ro
      - ./data/unpackerr/config:/config
      - /home/user/data:/data
    restart: "no"
    # Get the user:group correct so unpackerr can read and write to your files.
    user: 1000:1000
    # What you see below are defaults for this compose. You only need to modify things specific to your environment.
    environment:
      - TZ=Europe/London
      # General config
      - UN_DEBUG=false
      - UN_LOG_FILE=
      - UN_LOG_FILES=10
      - UN_LOG_FILE_MB=10
      - UN_INTERVAL=2m
      - UN_START_DELAY=1m
      - UN_RETRY_DELAY=5m
      - UN_MAX_RETRIES=3
      - UN_PARALLEL=1
      - UN_FILE_MODE=0644
      - UN_DIR_MODE=0755
      # Radarr Config
      - UN_RADARR_0_URL=http://172.21.0.2:7878
      - UN_RADARR_0_API_KEY=xxxxxxxxxxxxxxxxxxxxxxxxxx
      - UN_RADARR_0_PATHS_0=/downloads
      - UN_RADARR_0_PROTOCOLS=torrent
      - UN_RADARR_0_TIMEOUT=10s
      - UN_RADARR_0_DELETE_ORIG=false
      - UN_RADARR_0_DELETE_DELAY=5m
      # Sonarr Config
      - UN_SONARR_0_URL=http://172.21.0.2:8989
      - UN_SONARR_0_API_KEY=xxxxxxxxxxxxx
      - UN_SONARR_0_PATHS_0=/downloads
      - UN_SONARR_0_PROTOCOLS=torrent